Your Flashcard App Doesn't Know You

Mine does. Here's how I built a smarter SRS pipeline for functional Spanish fluency, and what's next.

I’m learning functional Spanish for everyday life.

That means I need to actually speak Spanish. Functional, practical, register-aware Spanish. The kind where you can argue with a landlord, navigate a municipal registration office, and make a friend at a bar.

I've been studying. I have a tutor. I have lesson notes. And for a while I was doing what every digital native does: copying vocab into Anki, running Duolingo streaks, leaving notes to rot in markdown apps.

None of it was working. Not because the tools are bad, exactly. Because they're boring.

They don't know what I know.

They don't know what I care about.

They treat me the same as someone who downloaded the app yesterday.

For someone building real, situated fluency on a deadline, that's not a minor inconvenience. That's the whole problem.

So I built something.

The Insight: Recognition vs. Production

Most flashcard systems get this wrong.

Recognition and production are different skills. They don't transfer to each other the way you'd hope.

If I show you madrugador and ask what it means, that's recognition.

If I say "tell me 'early riser' in Spanish" while you're mid-conversation, that's production.

The research is clear: getting good at recognition does not reliably make you good at production. Production practice builds recognition as a side effect. Recognition practice does almost nothing for production².

Most flashcard systems conflate them. One card, one direction, one stability score. But the skills are independent. You might be solid on recognizing a word and completely unable to retrieve it when you need it. That gap between passive and active vocabulary is exactly where fluency lives or dies.

The second piece: learning works best at the edge of what you know. Too easy and you're not growing. Too hard and nothing sticks. Psychologists call the productive middle the Zone of Proximal Development, the frontier where things are reachable but not yet consolidated. The best tutors work there instinctively⁴.

Consumer apps mostly don't, because they don't have a real model of what you know. I want a system that does.

What I Built: The V1 Flashcard Pipeline

The pipeline is simple by design. I built it using n8n, but the tool is less important than the function it serves.

Lesson notes go in via a form. Those notes go through a cheap, legacy model with a structured output schema and a prompt that instructs exhaustive extraction:

- every vocab item

- every phrase

- every grammar pattern

- every fixed expression

The model returns a validated JSON array of card objects. A Code node formats each card into Mochi's markdown structure. An HTTP node pushes them to my Mochi deck, rate-limited to avoid hammering their API.
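The push step is the one part of this that's easy to sketch outside n8n. Below is a rough Python version (an n8n HTTP node handles this through its own options, so this is an illustration, not the workflow itself). The `send` callable is a hypothetical stand-in for the actual HTTP request and just returns a status code; the interval and retry counts are made-up defaults.

```python
import time


def push_all(payloads, send, interval_s=1.0, max_retries=3, sleep=time.sleep):
    """Send payloads one at a time, pausing between requests.

    `send` is a hypothetical callable that performs the HTTP POST and
    returns the status code. A 429 triggers a longer wait and a retry.
    Returns the payloads that still failed after all retries.
    """
    failed = []
    for payload in payloads:
        status = None
        for attempt in range(max_retries + 1):
            status = send(payload)
            if status != 429:
                break
            sleep(interval_s * 2 ** (attempt + 1))  # back off: 2x, 4x, 8x the base gap
        if status is None or status >= 400:
            failed.append(payload)
        sleep(interval_s)  # steady gap between cards
    return failed


# Demo with a fake sender: the first call is rate-limited, the rest succeed.
responses = iter([429, 200, 200])
waits = []
failed = push_all(["card A", "card B"], lambda p: next(responses), sleep=waits.append)
print(failed, waits)
```

Passing `sleep` in as a parameter keeps the demo instant and makes the pacing easy to inspect.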

Notes in. Flashcards out.

Key design decisions

Structured output over hope

I used a JSON schema the model is constrained to follow rather than asking it to return JSON and parsing whatever comes back. That eliminates an entire class of failures.
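For a sense of what "constrained to follow a schema" looks like, here's a minimal sketch in Python. The field names (`front`, `back`, `kind`) and the `cards` wrapper object are assumptions for illustration, not the schema from the actual workflow. The wrapper is there because structured-output APIs commonly expect an object at the root. The small checker mirrors the schema by hand so the example has no dependencies.

```python
import json

# Hypothetical schema: one object wrapping an array of card objects.
CARD_SCHEMA = {
    "type": "object",
    "properties": {
        "cards": {
            "type": "array",
            "items": {
                "type": "object",
                "properties": {
                    "front": {"type": "string"},
                    "back": {"type": "string"},
                    "kind": {
                        "type": "string",
                        "enum": ["vocab", "phrase", "grammar", "expression"],
                    },
                },
                "required": ["front", "back", "kind"],
                "additionalProperties": False,
            },
        }
    },
    "required": ["cards"],
    "additionalProperties": False,
}


def check_cards(raw: str) -> list[dict]:
    """Parse the model output and re-check the parts of the schema we rely on."""
    item_schema = CARD_SCHEMA["properties"]["cards"]["items"]
    required = set(item_schema["required"])
    kinds = set(item_schema["properties"]["kind"]["enum"])
    cards = json.loads(raw)["cards"]
    for card in cards:
        if set(card) != required:
            raise ValueError(f"unexpected fields: {sorted(card)}")
        if card["kind"] not in kinds:
            raise ValueError(f"unknown kind: {card['kind']}")
    return cards


sample = '{"cards": [{"front": "madrugador", "back": "early riser", "kind": "vocab"}]}'
print(check_cards(sample))
```

The four `kind` values line up with the four extraction targets listed earlier; everything else about the shape is a guess.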

Format in code, not in the request body

The content string gets assembled in a Code node rather than inside the HTTP request. n8n's JSON body mode doesn't handle real newlines in expression values cleanly. Fix it at the source.
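The newline problem is easy to reproduce in any language. A small Python sketch (an n8n Code node would normally be JavaScript, but the behavior is the same idea): build the card body with real newlines, then compare pasting it into a hand-written JSON template against serializing the whole object. The `front`/`back` field names are hypothetical, and the `---` side separator reflects my understanding of Mochi's card format, so check it against their docs.

```python
import json


def format_card(card: dict) -> str:
    """Join the two sides with real newlines; '---' marks the side break."""
    return "\n".join([card["front"], "---", card["back"]])


card = {"front": "madrugador", "back": "early riser"}
content = format_card(card)

# Pasting the string into a hand-written JSON template leaves raw newlines
# inside a JSON string, which strict parsers reject.
naive_body = '{"content": "' + content + '"}'
try:
    json.loads(naive_body)
    naive_ok = True
except json.JSONDecodeError:
    naive_ok = False

# Serializing the whole object escapes each newline as \n.
safe_body = json.dumps({"content": content})
print(naive_ok, safe_body)
```

Assembling the string in code and letting a serializer handle escaping sidesteps the whole class of problem.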

Extract exhaustively, filter at review

The prompt instructs the model to return everything. No selective logic in the extraction step. Spaced repetition surfaces what matters. Missing a card is a worse failure mode than having one that gets quickly retired.

What broke along the way

The OpenAI response came back nested differently than expected and broke the Code node until I inspected the actual output.

The content string had newline serialization issues that took three different approaches to fix.

Mochi rate-limited with a 429 (too many requests, bb) before I set the batch interval in the HTTP node options.

None of it was hard in retrospect. None of it was obvious in the moment. I know more now than I did.
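The first of those failures, the unexpectedly nested response, is the kind of thing a defensive unwrapping step can absorb. Here's a hedged Python sketch. The wrapper keys it looks through are guesses at plausible shapes (the `choices` / `message` / `content` path resembles a chat-completion style response), and the `front` field is a hypothetical card field. The real fix is still to inspect the actual output.

```python
import json

# Keys to look through, in order. These are assumptions, not a documented contract.
WRAPPER_KEYS = ("cards", "choices", "message", "content")


def extract_cards(payload):
    """Dig the list of card dicts out of a few plausible response shapes."""
    if isinstance(payload, str):
        # Model output often arrives as a JSON string inside the response.
        return extract_cards(json.loads(payload))
    if isinstance(payload, list):
        if all(isinstance(x, dict) and "front" in x for x in payload):
            return payload
        return extract_cards(payload[0])
    if isinstance(payload, dict):
        for key in WRAPPER_KEYS:
            if key in payload:
                return extract_cards(payload[key])
    raise ValueError(f"no cards found in {type(payload).__name__} payload")


inner = json.dumps({"cards": [{"front": "madrugador", "back": "early riser"}]})
nested = {"choices": [{"message": {"content": inner}}]}
print(extract_cards(nested))
```

Failing loudly with a `ValueError` beats silently passing an empty list downstream.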

Quick notes on tool selection

I’m generally not dogmatic about tools, though I talk about some because they’re genuinely light-years ahead of their “peers”. The tools are less important to me than the function they serve.

That said, here’s the stack I built v1 with. Mostly chosen for ease of access, friction reduction, and good usability:

- Orchestration: n8n → Doesn’t penalize you for complexity, can be self-hosted, forward-looking

- Flashcards: Mochi → Has an API, affordable, UX doesn’t feel like an MS Paint vision quest

- LLM: Any legacy model that allows structured output and is multilingual + energy/token efficient

- Notes: Anything that I can export as markdown or plaintext; digital-first for now, image recognition eventually (maybe)

Where This Is Going

This is the foundation, not the destination.

Ultimately, a notes-to-flashcards tool isn’t very special. It might make a good low-code programming assignment for CompSci-adjacent university coursework.

Here’s what’s on the horizon.

Production Cards

The recognition/production problem needs its own workflow: a translator that takes an existing recognition deck and generates a parallel production deck.

I’m excited about building this, because it takes the deck that’s easy to make and turns it into a deck that makes my practice stronger.

I’ve decided to build it as a separate utility because it can run as a background process on existing decks. It’s an extension of the system, not an overhaul of what’s there. This becomes really important once I’m attached to my decks (aka they have so many cards/so much metadata that I’d lose something significant by wiping them for a rebuild).

Separate cards, separate stability curves, tracked independently. I might be solid on one side of a word and fragile on the other. The system should know that.
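In its simplest form, the translator is a flip. A minimal Python sketch, assuming hypothetical `front`, `back`, and `direction` fields on each card (a real version would probably ask a model to write a situational prompt instead of using a fixed template):

```python
def to_production(card: dict) -> dict:
    """Flip a recognition card: prompt in English, answer in Spanish."""
    return {
        "front": f'How do you say "{card["back"]}" in Spanish?',
        "back": card["front"],
        "direction": "production",
    }


recognition_deck = [
    {"front": "madrugador", "back": "early riser", "direction": "recognition"},
]

# The original deck is left untouched; the production deck is built alongside it.
production_deck = [to_production(c) for c in recognition_deck]
print(production_deck[0]["front"])
```

Because the function returns new cards and never mutates the originals, it can run over an existing deck without putting that deck's review history at risk.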

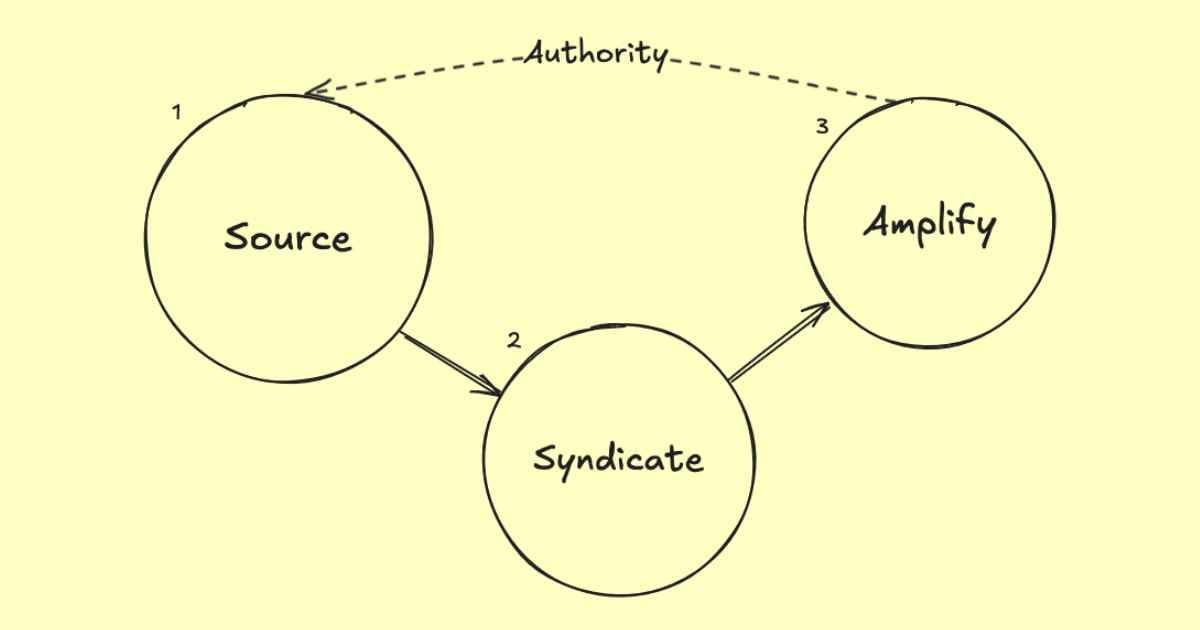

The Learner Brain

After that: a persistent knowledge base. Likely an SQLite database tracking per-item recall scores, exposure counts, stability estimates, and relationships between items. Not just "did you get this right" but "is this consolidated, is this fragile, what's adjacent to what you already know."
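One possible shape for that database, sketched with Python's built-in `sqlite3`. Every table and column name here is hypothetical. The one deliberate choice is keying skill state on item plus direction, so recognition and production get separate rows.

```python
import sqlite3

SCHEMA = """
CREATE TABLE items (
    id   INTEGER PRIMARY KEY,
    text TEXT NOT NULL,
    kind TEXT NOT NULL
);
CREATE TABLE skill_state (
    item_id        INTEGER NOT NULL REFERENCES items(id),
    direction      TEXT NOT NULL CHECK (direction IN ('recognition', 'production')),
    recall_score   REAL NOT NULL DEFAULT 0,
    exposures      INTEGER NOT NULL DEFAULT 0,
    stability_days REAL NOT NULL DEFAULT 0,
    PRIMARY KEY (item_id, direction)
);
CREATE TABLE relations (
    from_id  INTEGER NOT NULL REFERENCES items(id),
    to_id    INTEGER NOT NULL REFERENCES items(id),
    relation TEXT NOT NULL,
    PRIMARY KEY (from_id, to_id, relation)
);
"""

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.execute("INSERT INTO items (id, text, kind) VALUES (1, 'madrugador', 'vocab')")
conn.executemany(
    "INSERT INTO skill_state VALUES (?, ?, ?, ?, ?)",
    [(1, "recognition", 0.9, 12, 30.0), (1, "production", 0.4, 3, 2.5)],
)

# "What is fragile right now?" becomes a plain query.
fragile = conn.execute(
    "SELECT i.text, s.direction FROM skill_state s "
    "JOIN items i ON i.id = s.item_id WHERE s.stability_days < 7"
).fetchall()
print(fragile)
```

The 7-day cutoff is an arbitrary placeholder; the point is that the same word can be solid in one direction and fragile in the other.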

ZPD Curriculum Engine

With the brain in place, dynamic curriculum generation becomes possible. A weekly LLM pass that looks at my learner state and identifies what to retire, what to reinforce, and what I’m ready to learn next. A system that actually knows my frontier and what I care about.
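One way to prepare that weekly pass is a deterministic triage whose output gets handed to the model. A rough Python sketch follows. The thresholds, the field names, and the "ready if adjacent to something known" rule are all assumptions made for illustration, not a settled design.

```python
def triage(items, fragile_below=7.0, retire_at=60.0):
    """Sort learner state into retire / reinforce / ready-next buckets."""
    # "Known" means seen at least once and past the fragile threshold.
    known = {
        i["text"]
        for i in items
        if i["exposures"] > 0 and i["stability_days"] >= fragile_below
    }
    plan = {"retire": [], "reinforce": [], "ready_next": []}
    for item in items:
        if item["exposures"] == 0:
            # Unseen items are on the frontier only if they touch something known.
            if any(n in known for n in item.get("adjacent", [])):
                plan["ready_next"].append(item["text"])
        elif item["stability_days"] >= retire_at:
            plan["retire"].append(item["text"])
        elif item["stability_days"] < fragile_below:
            plan["reinforce"].append(item["text"])
    return plan


state = [
    {"text": "hola", "exposures": 40, "stability_days": 120.0},
    {"text": "madrugar", "exposures": 6, "stability_days": 14.0},
    {"text": "madrugador", "exposures": 3, "stability_days": 2.5},
    {"text": "madrugada", "exposures": 0, "stability_days": 0.0, "adjacent": ["madrugar"]},
    {"text": "empadronamiento", "exposures": 0, "stability_days": 0.0, "adjacent": []},
]
plan = triage(state)
print(plan)
```

Items that are neither fragile nor ready to retire (like `madrugar` here) fall through untouched and stay on their normal review schedule.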

Immersion Capture

The thing I'll need most on the ground: one tap on my phone to send a word I just heard, a phrase from a menu, something I didn't understand, directly into the pipeline. Processed asynchronously, cards waiting in Mochi the next time I open the app⁵.
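The asynchronous part boils down to an inbox: capture is instant, processing happens later. A tiny Python sketch of that split, with an in-memory queue standing in for whatever the phone shortcut would actually post to. The function names and fields are hypothetical, and `process` is a placeholder for the card pipeline.

```python
from collections import deque
from datetime import datetime, timezone

inbox = deque()


def capture(text: str, context: str = "") -> None:
    """Record a snippet immediately; no processing happens here."""
    inbox.append(
        {
            "text": text.strip(),
            "context": context,
            "captured_at": datetime.now(timezone.utc).isoformat(),
        }
    )


def drain(process) -> int:
    """Hand every pending capture to `process`, oldest first."""
    done = 0
    while inbox:
        process(inbox.popleft())
        done += 1
    return done


capture("  sobremesa ", context="heard at dinner")
processed = []
count = drain(processed.append)
print(count, processed[0]["text"])
```

Keeping `capture` free of any slow work is what makes the one-tap version plausible.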

The Bigger Point

Consumer learning apps are built for the median learner with the median goal. And a system built to an average of averages fits nobody well, just like airplane seats or all-in-one soap.

When my goal is situated, a real trip, a real deadline, a real social environment, the median tool is the wrong tool.

The stack I used isn’t exotic:

- n8n for orchestration

- GPT-4o for extraction

- Mochi for review

- Markdown for everything else

It took me one session to build. The harder part was knowing what to build and why, having a clear enough model of how learning works to design something that respects it.

Early bet: this will be a recurring theme as emerging and disruptive tools let people build faster. It's a problem if your direction is misaligned and a boon if it's well understood. If you’re pointed in the wrong direction, going 100x faster gets you to the wrong place sooner. Speed is good, but it gets superseded by someone who was pointed in the right direction before juicing the engine with rocket fuel.

This build is one piece of a larger effort I’m starting called skillmesh: personal learning infrastructure built as composable microservices.

The flashcard pipeline is the first. The learner brain is next. Eventually: a curriculum engine that works across subjects, plus input methods frictionless enough that capture becomes habit.

The goal is a system that learns how I learn.

V1 is live. Cards are in Mochi. Repo is initialized.

More soon.

Ōs,

– Jamey

Glossary

Spaced Repetition (SRS) → A learning technique that schedules review of material at increasing intervals based on how well you know it. Items you struggle with appear more frequently; items you've consolidated appear less often.

Zone of Proximal Development (ZPD) → A concept from developmental psychology describing the space between what a learner can do independently and what they can do with support. Effective instruction targets this zone: challenging enough to produce growth, accessible enough to produce success.

Recognition → The ability to understand a word or phrase when you encounter it. Passive knowledge. You see madrugador and know it means "early riser."

Production → The ability to retrieve and use a word or phrase when you need it. Active knowledge. You want to say "early riser" and can surface madrugador without a prompt.

Stability → In spaced repetition systems, a per-item score representing how consolidated a piece of knowledge is. High stability means the item is well-learned and doesn't need frequent review.

Immersion Capture → The practice of logging language encountered in real-world contexts (menus, conversations, signage) for later processing and review.

Composable Microservices → An architectural pattern where a system is built from small, independent components that each do one thing well and can be connected, extended, or replaced without rebuilding the whole. In short: simple, loosely coupled systems as a design principle.

Skillmesh → A personal learning infrastructure project built as a stack of composable microservices. The flashcard pipeline is the first component.

References

- Krashen, S. D. (1982). Principles and Practice in Second Language Acquisition. Pergamon Press.

Foundation for comprehensible input theory and the acquisition/learning distinction.

- Laufer, B., & Goldstein, Z. (2004). Testing vocabulary knowledge: Size, strength, and computer adaptiveness. Language Learning, 54(3), 399-436.

Direct research on the gap between receptive and productive vocabulary knowledge and asymmetric transfer.

- Nation, I. S. P. (2001). Learning Vocabulary in Another Language. Cambridge University Press.

Comprehensive treatment of productive vs. receptive knowledge distinction.

- Vygotsky, L. S. (1978). Mind in Society: The Development of Higher Psychological Processes. Harvard University Press.

Original source for Zone of Proximal Development.

- Cepeda, N. J., Pashler, H., Vul, E., Wixted, J. T., & Rohrer, D. (2006). Distributed practice in verbal recall tasks: A review and quantitative synthesis. Psychological Bulletin, 132(3), 354-380.

Spaced repetition research underpinning the SRS approach.
